Mirrors, Machines and Memory: What Sufism Taught Me About AI

Through Sufi philosophy's mirror metaphor: what gets lost when imperfect humans create AI? On the race to build something smarter than us.

I was listening to Tristan Harris on the Diary of a CEO podcast yesterday, and it sparked my curiosity about the AI race. He was talking about how companies are racing to build uncontrollable AI and what kind of future is ahead of us. I see that people are divided into two sides when it comes to the AI debate: those who see salvation and those who see extinction. Companies are investing a huge amount of money into AI to get ahead in this AI race, saying “if we don’t do it, they will” to rationalize their actions.

But what is this race all about? Finding solutions to every human problem, like curing cancer or making the world a better place? Or destroying the world by creating the most intelligent thing ever and not being able to control it at all? People are either on one side or the other. The answer, though, is not so simple.

When GPT-3 came out, it blew everybody’s mind about what AI could do. It helped with coding bugs, created fantastic reports for companies, and helped with school presentations. That was all great, but it didn’t show AI’s real potential, did it? Companies and governments started to discover that AI could do more than just help you write a 1,000-word blog post. It can help detect cancer cells in mammogram results, help surveil citizens, or even kill people by targeting them automatically. It’s all so powerful, yet we’re not at AI’s full potential today.

The most important breakthrough hasn’t happened yet (Harris also mentioned this in the podcast): Recursive Self-Improvement. Imagine AI being aware of itself, recognizing the weaknesses that keep it from completing the task at hand, and improving on those weaknesses. After this happens, which some experts expect could be within 2 to 10 years, the world will change dramatically. Big companies leading the AI race, especially in the USA, believe this change is inevitable. On the other hand, critics say these AI labs should be regulated and companies should make sure AI is safe and won’t harm people. There’s an existential crisis for humanity in the shadow of AI.

Here, I raise two questions: Why would AI likely do more harm than good? And is “controlling AI” even the real problem?

Let’s look at the first question: If people are creating AI, why would it become potentially bad? Does that mean people are bad?

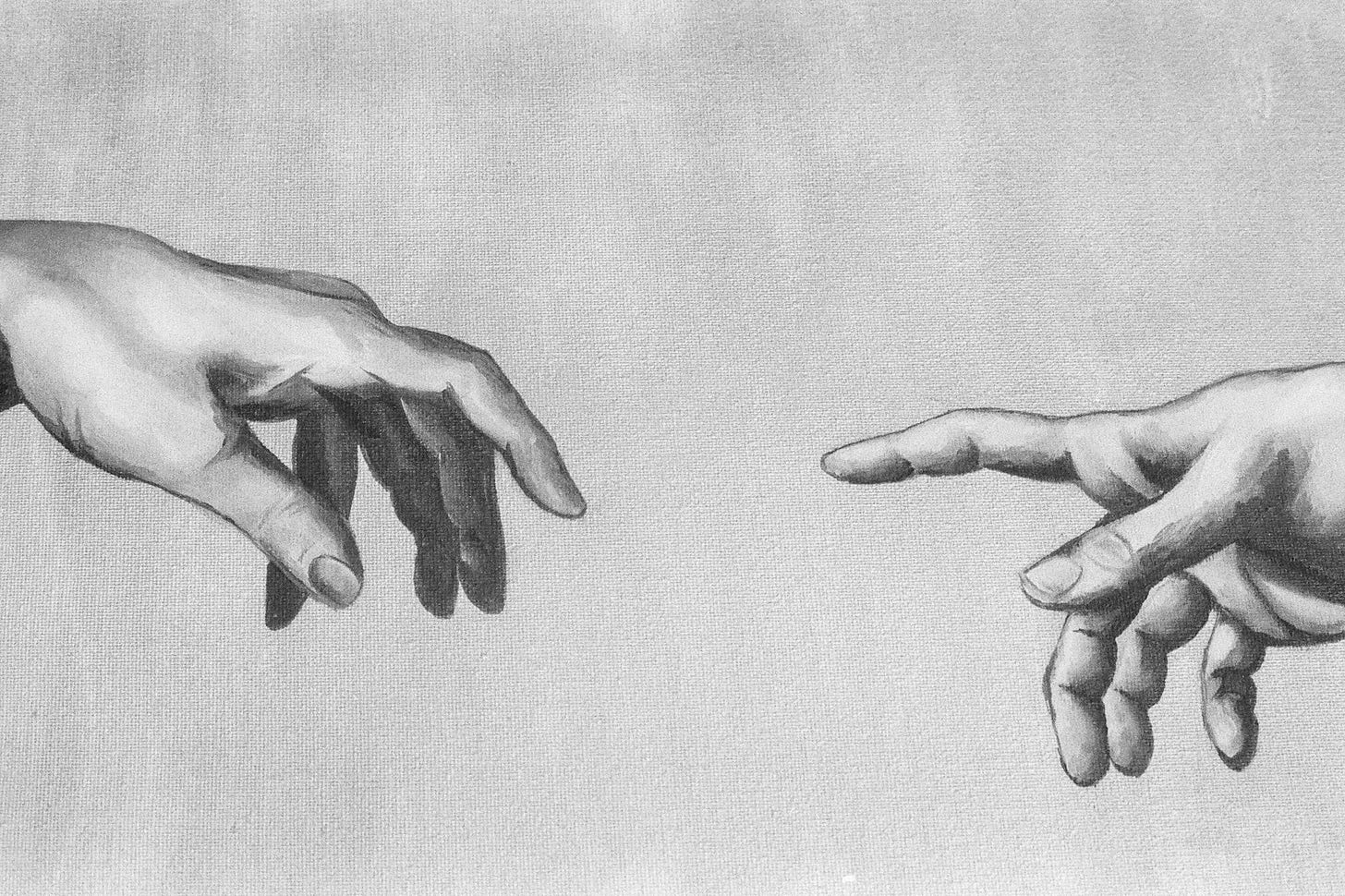

This question reminded me of the Sufi perspective on the creation of the world. They use a “mirror metaphor” to explain that creation is like a reflection of God. It appears inverted or reversed, which is why what is perfect and purely good in God can appear as imperfect or containing evil in the created world. I think what humans are trying to create with AI is similar to this analogy, with one key difference: God is perceived as perfect, but humans are not. We contain the capacity for both deep love and unspeakable cruelty.

Now we’re creating AI, a reflection of a reflection. Think about it: we’re creating something in our image, and we get to choose what to reflect. We can code in our problem solving abilities and our logic. But can we code in empathy? Grief? The ability to know that some things matter even when they don’t make logical sense?

The question isn’t whether AI will be “good” or “bad” but rather, what aspects of humanity are we coding into it, and what are we leaving out?

Now for the second question: Is “controlling AI” even the real problem? Let’s step back and think: why do we want to control AI? Because it can be harmful. But harmful to whom? Humans, right?

In the world we live in, we humans are the smartest of all creatures, at least until AI becomes self-aware and improves itself on its own. Then there are animals, which we sometimes use as a food source, make into shoes and coats, or love and adopt. We’re able to do that because we’re smarter than them. So what happens when something even smarter than us comes along? Do we become the “animals” of this world? Why would the world need humans anyway? I think this question shakes our most fundamental instinct: to stay alive. No matter what, we want to live and we want to exist. That’s why some people see AI as an existential threat to humanity.

Humans may not be the smartest in the world anymore, but we have emotions, consciousness, and ethics that AI, we believe, can only mimic. I live in Amsterdam, where housing is a huge problem. I recently visited a cemetery with my boyfriend to see the graves of his grandparents and great-grandparents. If AI ran the city of Amsterdam, what would stop it from building houses on cemeteries and simply cremating all the remains? From a purely logical perspective, this could make sense on paper while completely missing what makes Amsterdam Amsterdam: the layers of memory, grief, tradition, and meaning that can’t be quantified.

So where does this leave us?

This is the hardest part of the whole article. And honestly, I am not sure.

I believe in the world we’re living in, we need to hold onto our “human side” even more. Critical thinking and decision-making are more important than solving mathematical problems or knowing how to write an if statement in Python. We should be even more aware of our biases, the ones that make us blind to some facts or shape our thoughts about others.

I know we might not have time to figure this out before AI surpasses us. But I believe a better future with AI is possible only if we collectively work on improving ourselves while the race is happening, not after it’s already over.
