They Say AI Is for Everyone. But It Thinks in English

AI tokenizers make English the cheapest language to process. Discover the hidden language tax non-English speakers pay, and why it matters for AI fairness.

Recently, I came across an app that helps non-English speakers build apps in minutes.

When I looked at their About section, one sentence stood out:

“We want to democratize app creation for people who don’t speak English.”

I genuinely liked that mission.

But it also made me think.

Because if that’s the goal, what exactly is broken in the current system, and what does “democratizing AI” actually mean?

How AI Tokenization Actually Works

Before we talk about fairness, access, or democratization, we need to talk about something much more basic: how large language models process text at all.

If you’ve ever worked with LLMs before, you probably know this:

AI doesn’t understand words.

It doesn’t understand characters.

It understands tokens.

And almost everything — pricing, limits, latency — is based on them.

Tokenizers break text into pieces that models can work with. And tokenizers are not neutral.
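To see what “not neutral” can look like, here is a minimal Python sketch. It is a toy greedy longest-match splitter, not the algorithm behind any particular model, and `ENGLISH_BIASED_VOCAB` is a hypothetical, hand-picked vocabulary used only for illustration. The point is simply that the same splitter produces fewer pieces for text its vocabulary already covers.

```python
def tokenize_word(word: str, vocab: set) -> list:
    """Greedy longest-match split; characters not covered become single-character tokens."""
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest candidate starting at position i first.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab:
                tokens.append(word[i:j])
                i = j
                break
        else:
            # No vocabulary entry starts here: emit one character.
            tokens.append(word[i])
            i += 1
    return tokens


def tokenize(text: str, vocab: set) -> list:
    """Split on whitespace, then tokenize each word independently."""
    tokens = []
    for word in text.split():
        tokens.extend(tokenize_word(word, vocab))
    return tokens


# Hypothetical vocabulary: full English words, only fragments of Turkish.
ENGLISH_BIASED_VOCAB = {"in", "the", "house", "houses", "s", "ev", "ler"}

english_tokens = tokenize("in the houses", ENGLISH_BIASED_VOCAB)
turkish_tokens = tokenize("evlerde", ENGLISH_BIASED_VOCAB)  # Turkish for "in the houses"

print(len(english_tokens), english_tokens)
print(len(turkish_tokens), turkish_tokens)
```

With this made-up vocabulary, the English phrase comes out as three tokens and the Turkish word with the same meaning as four, even though the Turkish version is shorter in characters.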

Why English Is the Cheapest Language for AI

English has a “superpower”: it expresses meaning using very few tokens.

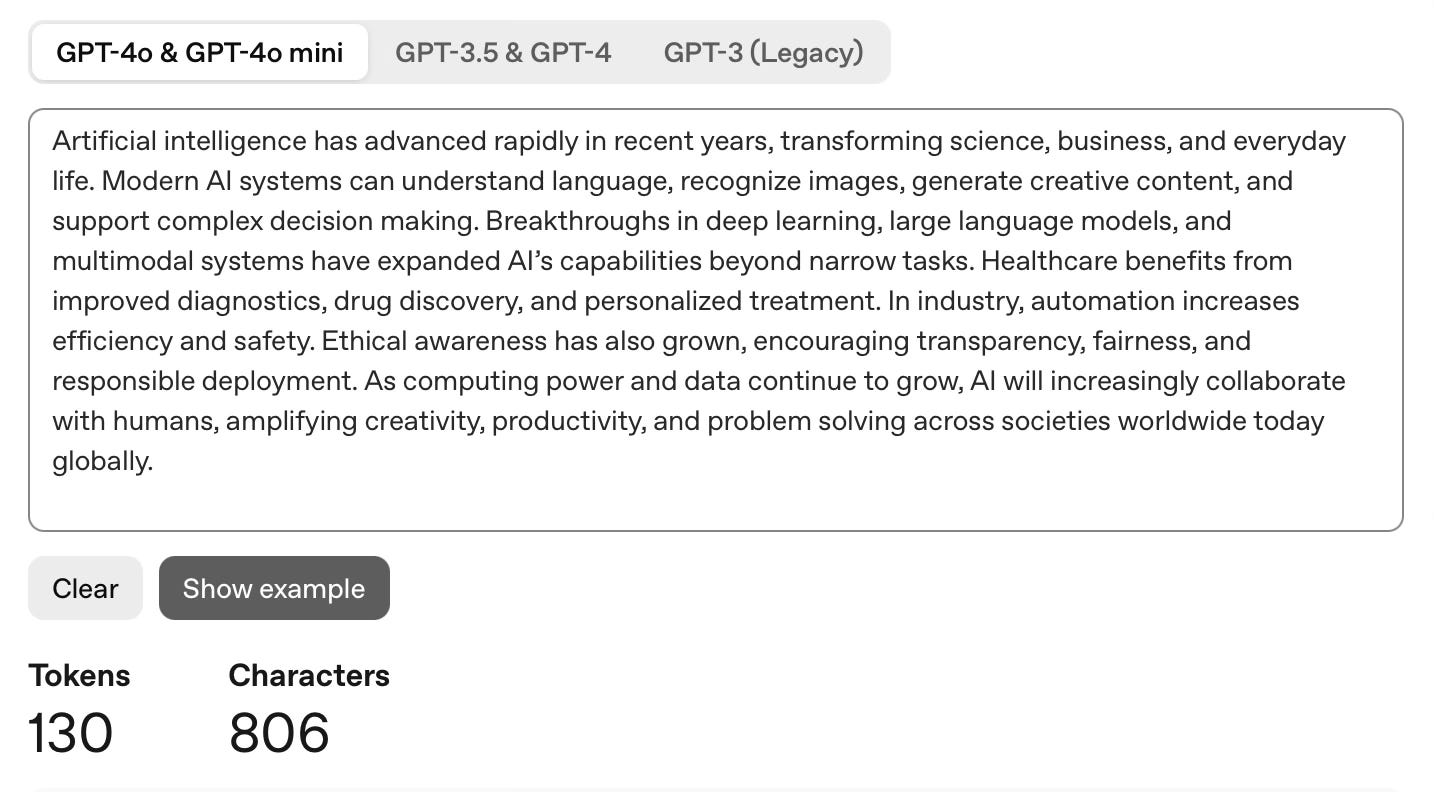

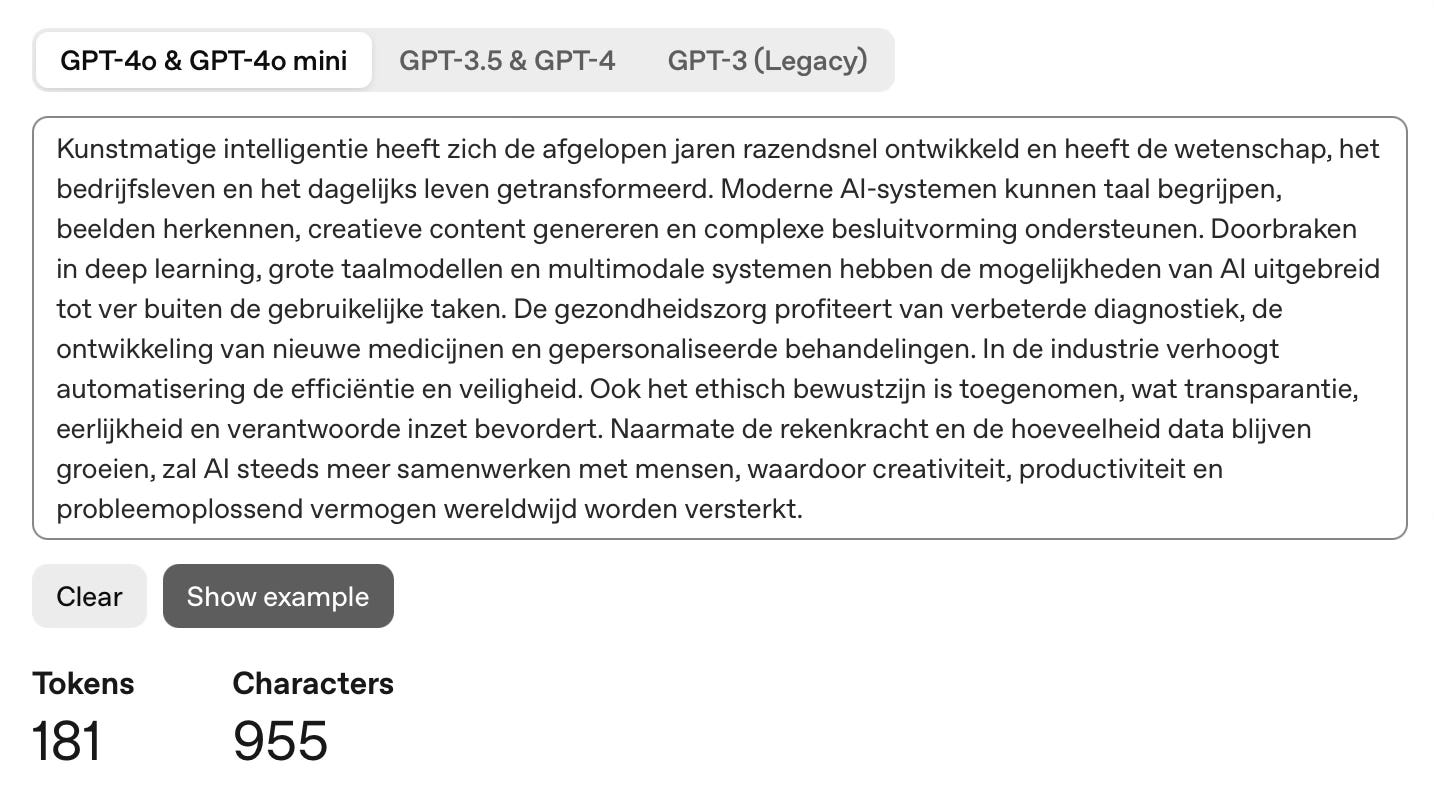

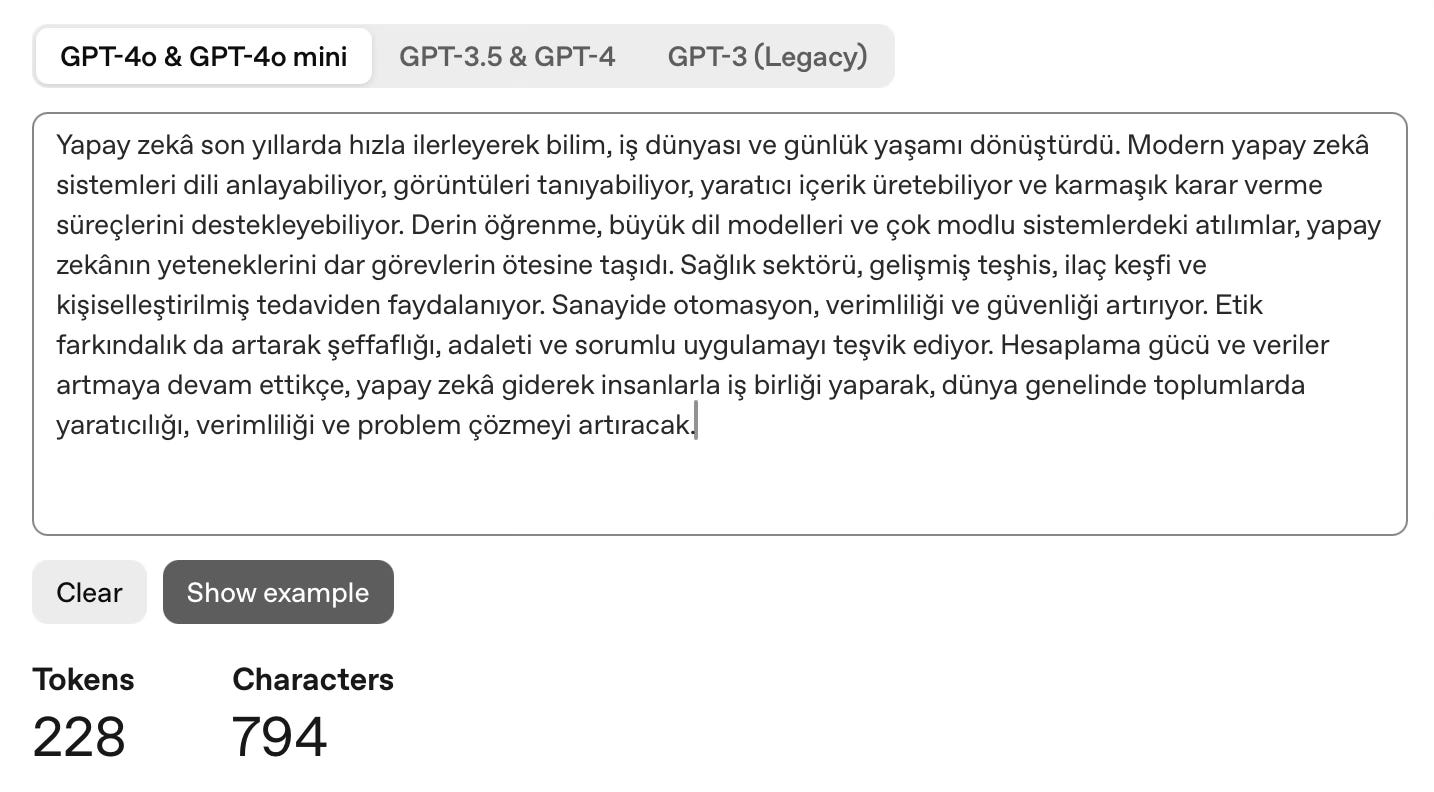

To make this concrete, I took the same content and wrote it in three languages that I am most familiar with:

English

Dutch

Turkish

Then I ran them through the same tokenizer (https://platform.openai.com/tokenizer) .

Here’s what came out:

Same idea, same intent, same information. Very different token counts.

The result is actually not random:

Dutch uses a lot of compound words, which tokenizers often split apart.

Turkish is an agglutinative language, where meaning is built by stacking suffixes onto a root, and each suffix can add more tokens.

English, on the other hand, gets a smooth ride.

This Isn't a Bug. It's How Tokenizers Are Designed

It’s easy to call this unfair or broken. But technically speaking, nothing is “wrong”.

English dominates high-quality training data.

Tokenizer vocabularies are learned from the most frequent character sequences in that data, so they end up optimized for English frequency patterns.

That means:

common English words → single tokens

common English suffixes → single tokens

common English constructions → efficiently compressed

As a result:

With tokenizers built this way, English expresses meaning with fewer tokens than almost any other natural language.

The system is doing exactly what it was designed to do.
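The frequency effect described above can be reproduced at toy scale. The sketch below is a simplified byte-pair-encoding style trainer (character-level, no end-of-word markers, no byte fallback). That is an assumption about the general family of algorithm, not a description of any vendor's tokenizer. The corpus is hypothetical too: three English words repeated 50 times each, plus one Turkish word, `geliyorum` (“I am coming”), that appears once.

```python
from collections import Counter


def merge_pair(symbols: tuple, pair: tuple) -> tuple:
    """Replace every adjacent occurrence of `pair` with one merged symbol."""
    merged = []
    i = 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return tuple(merged)


def train_bpe(corpus: list, num_merges: int) -> list:
    """Learn up to `num_merges` merge rules, most frequent adjacent pair first."""
    words = Counter(tuple(word) for word in corpus)
    merges = []
    for _ in range(num_merges):
        pair_counts = Counter()
        for symbols, freq in words.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break
        # Ties go to the pair that was counted first.
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)
        new_words = Counter()
        for symbols, freq in words.items():
            new_words[merge_pair(symbols, best)] += freq
        words = new_words
    return merges


def encode(word: str, merges: list) -> list:
    """Apply the learned merges, in training order, to a single word."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


# Hypothetical, deliberately lopsided corpus (English-dominant).
corpus = ["walking"] * 50 + ["walked"] * 50 + ["talking"] * 50 + ["geliyorum"]
merges = train_bpe(corpus, num_merges=10)

english_tokens = encode("walking", merges)
turkish_tokens = encode("geliyorum", merges)
print(len(english_tokens), len(turkish_tokens))
```

In this toy run the frequent English word ends up as a single token, while the rare Turkish word stays split into individual characters. Real vocabularies are far larger, so the gap is usually less extreme, but the bias comes from the same place: whatever is frequent in the training text gets merged first.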

That means English is the cheapest language to think in.

The Hidden Language Tax in AI Pricing

Most AI pricing today is token-based, directly or indirectly.

Which means non-English users hit limits faster, burn their credits sooner, or become “expensive” users without realizing why.

And none of this is visible at the UI level.

From the outside, everything looks inclusive.

From the inside, the system is quietly optimized around one language.
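To put rough numbers on how token-based limits play out, here is a small sketch. Every figure in it is a placeholder: `HYPOTHETICAL_TOKENS_PER_MESSAGE` and `HYPOTHETICAL_BUDGET` are invented for illustration and are not the measurements from the tokenizer comparison above, nor any provider's actual quota.

```python
def token_ratio(token_counts: dict, baseline: str = "English") -> dict:
    """Tokens needed per language, relative to the baseline language."""
    base = token_counts[baseline]
    return {language: count / base for language, count in token_counts.items()}


def messages_within_budget(budget_tokens: int, tokens_per_message: int) -> int:
    """How many whole messages fit in a fixed token budget."""
    return budget_tokens // tokens_per_message


# Placeholder numbers, NOT measurements: tokens for "the same message" per language.
HYPOTHETICAL_TOKENS_PER_MESSAGE = {"English": 100, "Dutch": 130, "Turkish": 160}
# Placeholder quota, e.g. a monthly credit expressed in tokens.
HYPOTHETICAL_BUDGET = 10_000

ratios = token_ratio(HYPOTHETICAL_TOKENS_PER_MESSAGE)
capacity = {
    language: messages_within_budget(HYPOTHETICAL_BUDGET, count)
    for language, count in HYPOTHETICAL_TOKENS_PER_MESSAGE.items()
}

for language in HYPOTHETICAL_TOKENS_PER_MESSAGE:
    print(f"{language}: {ratios[language]:.2f}x tokens, {capacity[language]} messages")
```

Under these made-up numbers, the same budget covers 100 English messages but only 62 Turkish ones. Swap in token counts from a real tokenizer to see the actual gap for your own text.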

English dominates AI not only because of data, but because most large AI systems are built in the United States, where English is the default language for research, infrastructure, and early users.

That default shapes what gets optimized and what becomes expensive. Other languages work, but they are not the center of the system.

This is why it matters for other countries (especially non-English speaking ones) to build their own LLMs: not to replace global models, but to ensure their languages, costs, and ways of thinking are first-class citizens rather than afterthoughts.

If AI systems:

think most cheaply in English

price based on tokens

and scale globally

Then we have to ask:

Who is actually paying the language tax?

Is it the user?

The company?

Or the people who never get to build at all?

Because real democratization will start when we design systems that don’t punish people for the language they think in.

And right now, we’re not quite there.

the "language tax" framing is sharp. it's invisible at the UI layer, which is exactly why it's easy to declare AI "democratized" while the infrastructure underneath is still heavily English-first.

though i'd push back a bit on the "build your own LLM" path as the solution — that's only accessible to a handful of countries with the capital, compute, and talent (China, France, UAE, maybe Japan). for everyone else, that bar is unreachable.

the more tractable fix might actually be at the tokenizer level — train tokenizers on balanced multilingual corpora rather than English-dominant ones. DeepSeek, for instance, has a tokenizer optimized for Chinese, which changes the economics without requiring every country to build from scratch. curious if you see that as viable, or do you think the data imbalance runs too deep to fix from the tokenizer up?

This is such an important point. We talk about ‘democratizing AI’ but rarely about the hidden costs baked into the system itself.